Redefining DevOps: People, Process, Tools, and Agents

The Definition Worked. Until a Fourth Participant Showed Up.

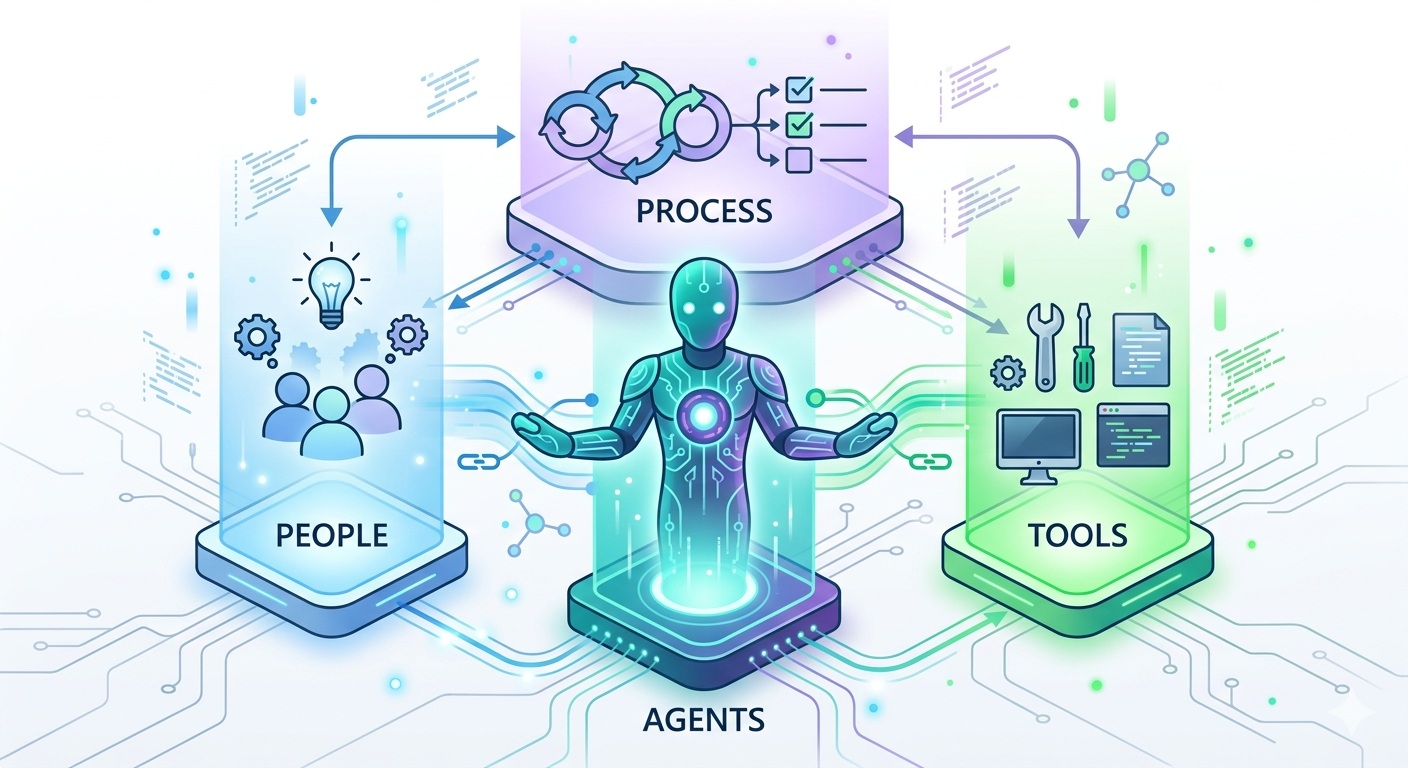

DevOps has always been defined by a simple, powerful equation: People + Process + Tools. That formula captured something essential about how modern software gets built and delivered. It broke down walls between development and operations. It gave organizations a mental model for diagnosing what was wrong when things moved too slowly, failed too often, or created too much friction.

For over a decade, this three-pillar model served the industry well. And it did so because it rested on an assumption that nobody questioned: every participant in the software delivery lifecycle was human.

That assumption no longer holds.

AI agents are now writing code, opening pull requests, triaging incidents, proposing infrastructure changes, generating documentation, and coordinating multi-step workflows across repositories. They are not occasional guests in the delivery lifecycle. They are becoming regular participants.

In previous posts, I explored the tactical dimensions of this shift: how DevOps foundations prepare systems for agents, how CI/CD pipelines must evolve, how to build and govern an AI agent team, and how the engineering role is changing. Those posts are specific and practical. This post is the conceptual framework that ties them together.

The argument is straightforward: the DevOps equation needs a fourth term. Not because the first three are wrong, but because they are incomplete. The updated model is People + Process + Tools + Agents, and understanding what changes at each layer is essential for any organization that wants to benefit from AI agents rather than be destabilized by them.

The Original Equation and Why It Worked

Before proposing changes, it is worth understanding why "People + Process + Tools" became the dominant framework in the first place.

DevOps emerged as a response to a specific problem: development teams and operations teams working in isolation, with conflicting incentives, different tools, and no shared accountability for outcomes. Developers optimized for shipping features. Operations optimized for stability. The result was friction, blame, and slow delivery.

The three-pillar model addressed this by making each dimension explicit:

People represented the cultural shift. Shared ownership. Blameless postmortems. Cross-functional teams. The recognition that organizational structure and incentives matter as much as technical architecture.

Process represented the operational discipline. Continuous integration. Continuous delivery. Automated testing. Change management. Feedback loops that connect what is deployed to what is observed in production.

Tools represented the technical enablers. Version control systems, CI/CD platforms, monitoring infrastructure, infrastructure-as-code frameworks, container orchestrators. The practical machinery that makes the cultural and process changes possible.

This model worked because all three pillars assumed the same type of participant: a human being who understands context, exercises judgment, communicates with teammates, and takes responsibility for outcomes. The entire DevOps movement was built around making humans more effective at delivering software together.

Every process was designed for human speed, human error patterns, and human decision-making. Every tool was designed with a human user in mind. Every cultural norm assumed that the people involved could be reasoned with, coached, and held accountable in the ways that human organizations function.

That assumption was never stated explicitly, because it never needed to be.

The Fourth Pillar: Agents

AI agents are not simply better tools. This is the single most important distinction in the entire post, and it is the one that most organizations get wrong.

Tools are passive. A CI/CD pipeline does exactly what its configuration tells it to do. A linter checks exactly the rules it has been given. A deployment script runs exactly the steps defined in it. Tools have no initiative, no interpretation, no autonomy. They are deterministic instruments operated by humans.

Agents are different. An agent receives an intent, interprets it, makes decisions about how to fulfill it, generates artifacts, and interacts with other systems. When you assign a task to the GitHub Copilot coding agent, it does not execute a predefined script. It reads the repository, understands the context, decides which files to modify, writes code, creates tests, and opens a pull request. It makes choices along the way that a human did not explicitly specify.

This distinction matters because it fundamentally changes three models that underpin how organizations deliver software:

| Model | With Tools Only | With Agents |
|---|---|---|
| Trust | Humans trust tools to execute correctly. Verification is mechanical. | Humans must trust agents to interpret correctly. Verification requires judgment about judgment. |
| Accountability | The human who invoked the tool is accountable for the outcome. Clear chain. | A human authorized the agent, but the agent made specific decisions. Accountability requires attribution trails. |
| Governance | Governed by access controls and pipeline rules. | Requires scoped permissions, constitutional guardrails, and delegation protocols. |

Agents sit in a new position in the organizational model: between people and tools. They have more autonomy than tools but less judgment than people. They can operate at tool-like speed but produce people-like artifacts (code, documentation, architectural proposals). They interact with the tool layer directly but require governance structures that look more like the ones we build for people.

Treating agents as tools means under-governing them. Treating agents as people means over-trusting them. Neither works. They need their own pillar.

How "People" Changes When Agents Join

When agents handle more of the execution work, the human role does not shrink. It shifts. Engineers become less like assembly-line workers and more like coaches, reviewers, and governors.

From Executor to Orchestrator

The traditional engineering workflow was: receive a task, design a solution, implement it, test it, submit it for review, address feedback, ship it. The engineer was the primary executor at every step.

With agents in the loop, the workflow becomes: define the intent clearly, configure the agent's scope and constraints, delegate the task, review the output, verify it meets requirements, approve or reject, iterate. The engineer's value shifts from writing code to ensuring the right code gets written.

This is not a minor adjustment. It requires genuinely different skills:

| Traditional Skill | Emerging Skill |
|---|---|
| Writing code from scratch | Writing specifications that agents can interpret unambiguously |
| Debugging by reading code line by line | Evaluating agent output against intent and architectural patterns |
| Manual testing and exploration | Designing verification criteria and automated validation |
| Code review for correctness | Code review for alignment with intent, security posture, and architectural fit |
| Learning new frameworks | Learning how to configure, constrain, and guide agents effectively |

I explored the specification skill in depth in From Prompts to Specifications. The core insight is that communicating intent to agents requires structured, versioned, testable specifications, not ad hoc prompts. Engineers who master this skill amplify their impact significantly.

The Trust Calibration Challenge

The hardest cultural shift is trust calibration. Agent-generated code compiles, passes tests, and often looks indistinguishable from human-written code. The temptation is to treat it as inherently trustworthy because it looks correct and the machine seems confident.

This is dangerous. Agents can produce code that is syntactically perfect but semantically wrong: correct implementation of the wrong behavior, architecturally misaligned solutions, or subtle security issues that no automated check catches.

As I discussed in Humans and Agents: Collaboration Patterns, effective collaboration requires that humans maintain a healthy skepticism calibrated to the risk of the change. A cosmetic UI tweak warrants lighter scrutiny than a change to authentication logic, regardless of whether a human or an agent produced it.

New Cultural Norms

Teams need to develop new norms:

- Agent output is always reviewed. Not rubber-stamped. Reviewed with the same rigor applied to code from a new team member.

- Delegation is explicit. When an agent is assigned a task, there is a clear record of who authorized it, what scope was given, and what constraints were set.

- Skill development shifts. Training budgets and professional development programs should include specification design, agent configuration, and output validation, not just programming languages and frameworks.

How "Process" Changes When Agents Join

Processes designed for human contributors assumed certain characteristics: humans work at a certain pace, make certain types of mistakes, communicate through certain channels, and can be held accountable through organizational structures. Agents break many of these assumptions.

Speed and Volume

Humans produce code at a rate constrained by thinking, typing, context-switching, meetings, and biological needs. Agents produce code at a rate constrained by API latency and compute availability. A single agent can generate dozens of pull requests in the time a human produces one.

This is not merely faster. It is qualitatively different. Processes that worked when a team produced ten pull requests a day need to function when agents add fifty more. Review processes, merge strategies, testing infrastructure, and deployment pipelines all face throughput pressure that existing processes were not designed for.

Different Failure Modes

Human errors tend to be recognizable: typos, logic mistakes, forgotten edge cases, copy-paste artifacts. Peer reviewers have decades of pattern recognition for these failure modes.

Agent errors look different. They are often confidently wrong: syntactically correct code that implements the wrong behavior, architecturally plausible solutions that violate unstated conventions, or security-adjacent code that passes automated scans but introduces subtle vulnerabilities. As I explored in CI/CD Pipelines for the Agentic Era, verification strategies must evolve to catch these failure modes.

New Process Layers

Processes need several new layers that did not exist in the human-only model:

Delegation protocols. Before an agent acts, there should be a clear protocol: who authorized the agent to work on this task? What specification or instruction governed its behavior? What scope boundaries constrained it? Without delegation protocols, you get autonomous agents making changes that nobody requested or approved.

Attribution tracking. Every artifact an agent produces needs to be traceable to the human or system that initiated the action. This is not just for accountability. It is for debugging, auditing, and understanding why a particular decision was made. When an incident occurs in production, "an agent changed this file" is not sufficient. You need to know which agent, under what instructions, authorized by whom, and reviewed by whom.
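As a minimal sketch of what a delegation and attribution record might capture, the following models the four questions above (which agent, under what instructions, authorized by whom, reviewed by whom) as a small data structure. The class name `DelegationRecord`, its fields, and the helper `missing_attribution` are hypothetical names chosen for illustration, not part of any existing tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DelegationRecord:
    """Hypothetical record of one delegated agent task."""
    agent_id: str                      # which agent acted
    authorized_by: str                 # human who delegated the task
    spec_ref: str                      # specification or instruction that governed the work
    allowed_paths: tuple[str, ...]     # scope boundaries, as glob patterns
    reviewed_by: Optional[str] = None  # set once a human has reviewed the output


def missing_attribution(record: DelegationRecord) -> list[str]:
    """Return the attribution questions this record cannot yet answer."""
    gaps = []
    if not record.agent_id:
        gaps.append("which agent")
    if not record.spec_ref:
        gaps.append("under what instructions")
    if not record.authorized_by:
        gaps.append("authorized by whom")
    if not record.reviewed_by:
        gaps.append("reviewed by whom")
    return gaps


record = DelegationRecord(
    agent_id="dependency-updater",        # illustrative values only
    authorized_by="alice",
    spec_ref="specs/update-deps.md",
    allowed_paths=("requirements*.txt",),
)
print(missing_attribution(record))
```

A record like this could be attached to the pull request or stored alongside it; where it lives matters less than the fact that every field can be answered during an incident review.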

Scope boundaries. Agents should have explicit boundaries on what they can touch. An agent tasked with updating dependencies should not be able to modify authentication logic. An agent generating documentation should not be able to alter production infrastructure code. These boundaries need to be encoded in the agent's configuration and enforced by the toolchain.
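One way such a boundary could be enforced is a check that compares the files an agent changed against the glob patterns it was authorized to touch. The sketch below assumes the changed file list and the allowed patterns are already available as plain lists; the function name `out_of_scope` and the example paths are illustrative assumptions.

```python
from fnmatch import fnmatchcase


def out_of_scope(changed_files: list[str], allowed_patterns: list[str]) -> list[str]:
    """Return the changed files that match none of the allowed glob patterns."""
    return [
        path
        for path in changed_files
        if not any(fnmatchcase(path, pattern) for pattern in allowed_patterns)
    ]


# Example: a dependency-update agent that also touched authentication code.
violations = out_of_scope(
    changed_files=["requirements.txt", "src/auth/login.py"],
    allowed_patterns=["requirements*.txt", "package*.json"],
)
print(violations)  # files the agent was not authorized to modify
```

Note that `fnmatchcase` lets `*` match path separators, so a pattern such as `docs/*` covers nested files as well. A pipeline step could fail the build whenever the returned list is non-empty.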

Verification gates. Beyond traditional "does it compile and pass tests" checks, processes need verification gates that validate agent output against the specification or intent that triggered it. Did the agent actually do what was asked? Did it stay within its authorized scope? Did it introduce patterns that conflict with the repository's architectural conventions?

How "Tools" Changes When Agents Join

The tool layer has always been the most tangible pillar of DevOps. Version control, CI/CD platforms, monitoring systems, infrastructure-as-code frameworks. These tools were designed with human operators in mind: dashboards for human eyes, CLIs for human hands, notifications for human attention.

When agents become active participants, the tool layer must become agent-aware.

Repositories as Shared Workspaces

A repository is no longer just a place where humans store and collaborate on code. It is a shared workspace where both humans and agents operate. This means repositories need structural elements that agents can interpret:

- Instruction files (`.github/copilot-instructions.md`, `.github/instructions/`) that encode project conventions, coding standards, and architectural constraints in a format agents can consume

- Specification directories (`specs/`) where structured intent documents live, providing agents with clear requirements rather than ambiguous prompts

- Agent configurations (`.github/agents/`) that define specialized agent personas with scoped expertise and boundaries

- Constitutional documents (`.specify/memory/constitution.md`) that establish non-negotiable principles all agents must follow
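If the repository layout is treated as an interface, its presence can be checked like any other contract. The sketch below reports which of the paths listed above are absent from a checkout. The constant `AGENT_FACING_PATHS` and the function `missing_agent_paths` are hypothetical names, and the sketch assumes a team wants all of these paths rather than a subset.

```python
from pathlib import Path

# Paths taken from the list above; which of them a team requires is a local decision.
AGENT_FACING_PATHS = [
    ".github/copilot-instructions.md",
    ".github/instructions",
    "specs",
    ".github/agents",
    ".specify/memory/constitution.md",
]


def missing_agent_paths(repo_root: str) -> list[str]:
    """Return the agent-facing paths that do not exist under repo_root."""
    root = Path(repo_root)
    return [rel for rel in AGENT_FACING_PATHS if not (root / rel).exists()]


if __name__ == "__main__":
    # Run from the repository root to see what is missing.
    for rel in missing_agent_paths("."):
        print(f"missing: {rel}")
```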

I described this repository architecture in Building Your AI Agent Team and Designing Software for an Agent-First World. The key insight is that repository structure is no longer just an organizational convenience. It is an interface that both humans and agents rely on, and its quality directly affects the quality of agent output.

CI/CD Pipelines as Trust Infrastructure

As I discussed in detail in CI/CD Pipelines for the Agentic Era, pipelines must evolve from simple gatekeepers to comprehensive verification systems. But the shift goes beyond pipeline configuration. The fundamental role of the CI/CD platform changes.

In the human-only model, CI/CD was a quality accelerator. It caught human mistakes faster than manual processes could.

In the agents-included model, CI/CD becomes the primary trust mechanism. It is the system that validates whether agent output meets the standards the organization requires. Think of it as the last line of defense between an agent's autonomous decisions and production.

This means CI/CD tools need new capabilities:

| Capability | Purpose |
|---|---|
| Provenance tracking | Know whether a commit came from a human or an agent, and which agent |
| Scope validation | Verify that changes stay within the boundaries the agent was authorized to operate in |
| Specification matching | Check that the implementation matches the spec that triggered the work |
| Hallucination detection | Catch references to non-existent APIs, packages, or patterns |
| Differential scrutiny | Apply different verification depths based on the source and risk profile of the change |
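To make one row of this table concrete, here is a narrow sketch of hallucination detection for Python code: it parses a source file and reports imported top-level modules that cannot be found in the current environment. This covers only non-existent packages, not non-existent functions or patterns, and it assumes the check runs in an environment where the project's real dependencies are installed. The function name `unresolved_imports` is an illustrative choice.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return top-level module names imported by `source` that cannot be located."""
    names = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 means an absolute import; relative imports are skipped.
            names.add(node.module.split(".")[0])
    return sorted(name for name in names if importlib.util.find_spec(name) is None)


agent_output = "import json\nimport definitely_not_a_real_pkg_xyz\n"
print(unresolved_imports(agent_output))
```

A check like this is cheap enough to run on every agent-authored pull request, with deeper checks reserved for changes that touch higher-risk areas.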

Observability That Includes Agent Actions

Monitoring and observability systems were designed to track application behavior and infrastructure state. When agents are active in the delivery lifecycle, observability needs to extend to agent behavior.

How many pull requests did agents open today? What was their merge rate versus rejection rate? Which agent-generated changes caused incidents? How much of the codebase was written by agents versus humans? Are agents drifting from their configured behavior over time?

These questions are not academic. They are operational necessities. Without observability over agent behavior, teams are flying blind in a system where a growing percentage of changes are made by non-human actors.
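The first two questions above can be answered with very little machinery once pull requests are labeled by author type. The sketch below assumes each pull request is already tagged as human or agent and has a simple state; the `PullRequest` class, its field values, and `agent_pr_metrics` are hypothetical and would map onto whatever the hosting platform's API returns.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PullRequest:
    author_kind: str  # "human" or "agent" (assumed labeling)
    state: str        # "merged", "rejected", or "open"


def agent_pr_metrics(prs: list[PullRequest]) -> dict[str, Optional[float]]:
    """Summarize agent-authored pull requests: volume and merge rate."""
    agent_prs = [pr for pr in prs if pr.author_kind == "agent"]
    merged = sum(pr.state == "merged" for pr in agent_prs)
    rejected = sum(pr.state == "rejected" for pr in agent_prs)
    decided = merged + rejected
    return {
        "opened": len(agent_prs),
        "merged": merged,
        "rejected": rejected,
        # Merge rate over decided PRs only; None when nothing has been decided yet.
        "merge_rate": merged / decided if decided else None,
    }
```

Tracking the merge rate over time is one rough signal for the drift question: a falling rate may suggest that agent output is moving away from what reviewers accept.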

The New Trust Equation

In the original DevOps model, trust was primarily a cultural construct. Teams built trust through shared responsibility, blameless postmortems, transparent communication, and incremental proof that the system worked. This was fundamentally interpersonal: humans learning to trust other humans across organizational boundaries.

When agents enter the loop, trust must be extended to non-human participants that cannot be coached, cannot participate in postmortems, and cannot internalize organizational values in the way humans do. Trust must be constructed through mechanisms rather than relationships.

The new DevOps trust equation can be expressed as:

Trust = Strong Foundations × Clear Delegation × Automated Verification × Human Oversight at Decision Points

Each factor is multiplicative, not additive. If any factor is zero, trust collapses:

Strong Foundations. The DevOps basics: version control, automated testing, CI/CD, monitoring, security scanning. Without these, agents amplify chaos. With them, agents amplify capability. This is the premise of Agentic Software Engineering Needs Strong DevOps Foundations.

Clear Delegation. Every agent action should be traceable to a human-authorized scope. Who asked the agent to act? What specification governed the work? What boundaries restricted the agent's autonomy? Delegation without clarity is abdication.

Automated Verification. Agent output must pass through automated checks that go beyond "does it compile." Specification compliance, scope validation, architectural conformance, security analysis, and hallucination detection. The pipeline is the objective truth.

Human Oversight at Decision Points. Not every action, but every consequential decision. Deployment approvals, security-related changes, architectural shifts, dependency additions. Humans retain authority where judgment is irreplaceable.

This equation is practical, not theoretical. Every organization can evaluate its current position on each factor and identify where the weakest link exists. Most will find that delegation and verification are the least mature, because those capabilities were not needed when all participants were human.
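The multiplicative structure can be illustrated with a small self-assessment sketch. It assumes each factor is scored on a 0 to 1 scale, which is a convention introduced here for illustration and not part of the equation as stated; the function names are likewise hypothetical.

```python
from math import prod


def trust_score(foundations: float, delegation: float,
                verification: float, oversight: float) -> float:
    """Multiply the four factors; any factor at zero collapses the result to zero."""
    factors = (foundations, delegation, verification, oversight)
    for value in factors:
        if not 0.0 <= value <= 1.0:
            raise ValueError("each factor must be scored between 0 and 1")
    return prod(factors)


def weakest_factor(scores: dict[str, float]) -> str:
    """Return the name of the lowest-scoring factor, the first place to invest."""
    return min(scores, key=scores.get)


scores = {"foundations": 0.9, "delegation": 0.3, "verification": 0.5, "oversight": 0.8}
print(trust_score(**scores))
print(weakest_factor(scores))
```

Because the factors multiply, raising the lowest score improves the result more than polishing a factor that is already strong.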

A Maturity Model for People + Process + Tools + Agents

Not every team is ready for full agent integration, and that is fine. Progress happens in stages. Understanding where your team sits, and what is required to advance, helps set realistic expectations and prioritize investments.

Stage 1: Assistance

Agents help with discrete, well-bounded tasks within existing processes. Developers use AI coding assistants for autocomplete, inline suggestions, and chat-based Q&A. Agents work entirely within the developer's session. No autonomous pull requests, no multi-step workflows, no delegation.

What characterizes this stage:

- Agents are tools within the IDE, not independent actors

- All output is immediately reviewed and modified by the developer

- Existing processes, pipelines, and governance structures are unchanged

- Trust is not an issue because the human is always in the loop

What most teams get right at this stage: Quick adoption and measurable productivity gains for individual tasks.

What most teams miss: Assuming this is the end state. Assistance is the beginning, not the ceiling.

Stage 2: Augmentation

Agents manage multi-step workflows with human oversight at key checkpoints. The GitHub Copilot coding agent receives a task, works on a branch autonomously, and opens a pull request for review. Agents generate not just code but tests, documentation, and sometimes infrastructure changes. Delegation is explicit. Review is mandatory.

What characterizes this stage:

- Agents operate outside the developer's immediate session

- Pull requests from agents are reviewed with the same rigor as human contributions

- Specification-driven development emerges as a practice (see From Prompts to Specifications)

- CI/CD pipelines begin to differentiate between human and agent contributions

- Agent configurations and instruction files are version-controlled

What most teams get right at this stage: Setting up initial agent workflows and experiencing significant productivity gains for routine tasks.

What most teams miss: Underinvesting in verification, attribution, and scope controls. The temptation is to trust agent output because it compiles and tests pass. Teams at this stage need to actively build the governance muscle that Stage 3 requires.

Stage 3: Orchestration

Agents coordinate with each other and with humans across the full lifecycle. Multiple specialized agents handle different aspects of the delivery process: one agent writes code from specifications, another reviews it, another updates documentation, another proposes infrastructure changes. Agents operate within constitutional guardrails, scoped skill profiles, and automated verification. Humans govern the system rather than performing individual tasks.

What characterizes this stage:

- Multi-agent coordination with clear role separation

- Constitutional governance documents define non-negotiable boundaries

- Automated specification compliance verification

- Full provenance tracking for all agent actions

- Observability dashboards that include agent metrics

- Humans function primarily as governors, architects, and exception handlers

What most teams get right at this stage: The teams that reach this stage generally have strong engineering cultures and mature DevOps practices to begin with.

What most teams miss: Overcomplicating the orchestration layer. The best multi-agent systems are composed of simple, well-scoped agents with clear boundaries, not one omniscient agent trying to do everything.

Where Most Teams Are Today

Most organizations are somewhere between Stage 1 and Stage 2. They have adopted AI coding assistants and are beginning to experiment with autonomous agent workflows. The gap between Stage 2 and Stage 3 is significant and requires investment in governance, verification, and observability infrastructure that most teams have not yet begun building.

The path forward is incremental. Each stage builds on the previous one. Trying to jump directly from Assistance to Orchestration without building the governance and verification layers of Augmentation is a recipe for incidents, technical debt, and eroded trust.

What Doesn't Change

With all the emphasis on what is new, it is important to be clear about what endures.

DevOps was never really about tools. It was about culture, collaboration, and feedback loops. The infinite loop of Plan, Code, Build, Test, Release, Deploy, Operate, Monitor still applies. Every principle that made DevOps valuable (shared ownership, continuous improvement, fast feedback, learning from failure) remains essential.

What changes is who (or what) performs each step and how accountability flows.

Consider this mapping of the traditional DevOps practices to the four-pillar model:

| DevOps Principle | Still Applies? | What Changes |
|---|---|---|
| Shared ownership | Yes | Ownership now extends to governing agent behavior and output |
| Continuous integration | Yes | CI must handle higher volume and distinguish contributor types |
| Automated testing | Yes | Tests must validate agent output against specifications, not just functionality |
| Infrastructure as code | Yes | IaC templates become inputs that agents can modify, requiring tighter review |
| Monitoring and observability | Yes | Observability must include agent activity and decision tracking |
| Blameless postmortems | Yes | Postmortems must trace agent decisions and the delegations that authorized them |
| Continuous improvement | Yes | Improvement now includes refining agent configurations, specifications, and governance |

The core insight is that agents amplify whatever culture you already have. Strong cultures with mature practices, clear accountability, and genuine commitment to quality will find that agents make them even stronger. Teams with weak foundations, unclear ownership, missing tests, and fragile pipelines will find that agents scale their weaknesses faster than their strengths.

This is not a hypothetical warning. It is a recurring pattern among teams that introduce agents without first investing in the foundations. Agents do not fix broken processes. They execute broken processes at scale.

The Fourth Member

The industry is moving from DevOps to what some call "AgentOps" or "Agentic DevOps." The label matters less than the recognition that software delivery now has a fourth type of participant.

For more than a decade, the DevOps community worked to bring developers and operations together. That work was about bridging a gap between two groups of people who shared the same goal but worked in isolation. The union of People, Process, and Tools was the framework for bridging that gap.

The next decade will be about integrating a fundamentally new kind of participant: one that operates at machine speed, produces human-readable artifacts, has more autonomy than any tool that came before, but lacks the judgment, context, and values that humans bring.

Organizations that recognize this shift and update their people, processes, and tools accordingly will lead. They will deliver faster, with fewer defects, and with better security posture, because they will have harnessed the strengths of agents while containing their limitations.

Organizations that try to squeeze agents into a human-only framework will discover an uncomfortable truth: agents scale weaknesses faster than strengths. Without clear delegation, agents act without authorization. Without automated verification, agent errors reach production unchecked. Without human oversight at decision points, consequential choices get made by systems that optimize for the letter of the prompt rather than the spirit of the intent.

The equation has four terms now. Build for all of them.